This post is the second in a series I am writing about how to use pentest data in security metrics to analyze and improve your application security program.

In my first post, I talked about the benefits of AppSec metrics and described a couple of different categories for pentest metrics:

- Program level metrics, which can be used to analyze the overall pentest setup for an organization and make strategic decisions, and
- Engagement level metrics, which can be tied to an individual pentest engagement to determine how well it is performing.

Here, I plan to discuss several examples from each group in more detail.

Pentest Program Level Metrics

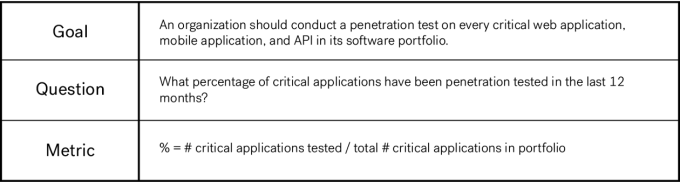

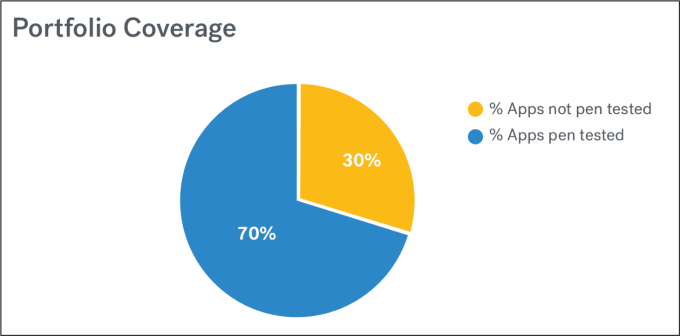

Portfolio Coverage: An organization should apply security controls in a risk-based manner across its entire software portfolio.

When it comes to penetration testing, the term “coverage” can mean a variety of different things. This same word may be used to represent coverage across an application portfolio (how many apps have been tested out of a total number of apps), indicate exploration within a single application (using a checklist approach to document what has been tested, such as the OWASP Top 10 or ASVS), or specify how much data about the application has been shared with the tester (white box, grey box, black box).

Whenever you’re using metrics (fitness, security, or otherwise), it’s important to clarify the specific context that you’re talking about.

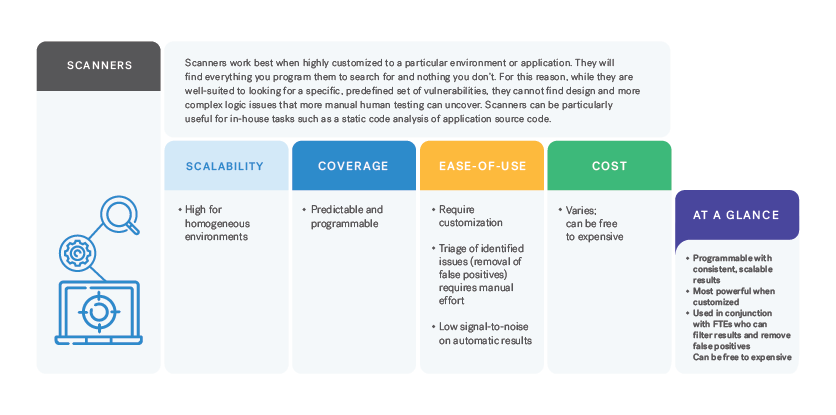

Here, I’m talking about coverage across an application portfolio. Ideally, an organization should apply security controls in a risk-based manner across its entire software portfolio. That might mean performing third-party penetration tests on the critical applications and running a scanner on the rest. Because resources for application security testing are limited and a software portfolio consists of applications of varying risk levels, application security controls are not one-size-fits-all.

Let’s say an organization has decided to conduct penetration testing on all critical customer-facing applications. If that organization has ten critical customer-facing applications in its software portfolio, then its goal should be to pentest all ten of these applications.
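As a rough illustration, portfolio coverage for this example can be computed as the share of in-scope applications that have been pentested. The sketch below makes several assumptions: the `App` record, its field names, and the sample inventory are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class App:
    # Hypothetical inventory record; field names are illustrative assumptions.
    name: str
    critical: bool
    customer_facing: bool
    pentested: bool


def portfolio_coverage(apps):
    """Return the fraction of in-scope apps that have been pentested.

    In scope here means critical and customer-facing, matching the example
    policy in the text. Returns None when nothing is in scope.
    """
    in_scope = [a for a in apps if a.critical and a.customer_facing]
    if not in_scope:
        return None
    tested = sum(1 for a in in_scope if a.pentested)
    return tested / len(in_scope)


# Ten critical customer-facing apps, seven of them tested so far,
# plus one low-risk app that is out of scope for this metric.
apps = [App(f"app-{i}", True, True, i < 7) for i in range(10)]
apps.append(App("internal-wiki", False, False, False))

coverage = portfolio_coverage(apps)
print(f"Portfolio coverage: {coverage:.0%}")
```

Filtering to the in-scope set first keeps the denominator tied to the stated policy, so low-risk apps that were never meant to be pentested do not drag the number down.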

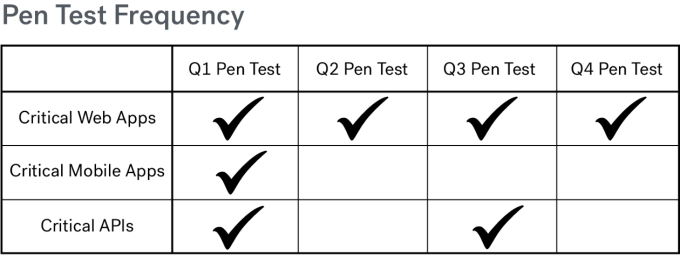

Pentest Frequency: An organization should conduct a penetration test on critical applications once a quarter.

Many regulations and standards, such as PCI DSS, SOX, and HIPAA, require (or are commonly taken to require) an annual penetration test, often from a third party. I look at the annual compliance requirement as the bare minimum that an organization should do for critical applications. Security-savvy organizations know that as time goes on and code changes (and/or requires patches to stay up to date), semi-annual or quarterly penetration tests are a much better idea.

Attackers are constantly evolving the way that they attempt to breach applications. In order to stay one step ahead, penetration testing should be conducted periodically on an application, even if no recent changes have been made. New vulnerabilities are discovered all the time, and an application may be vulnerable to attack if software updates have not been installed.
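One way to track this metric is to flag applications whose last pentest is older than the target interval. The following is a minimal sketch, assuming a simple mapping of application name to last test date; the 90-day default is an approximation of "once a quarter", and the sample data is made up.

```python
from datetime import date


def overdue_for_pentest(last_tested, today, max_interval_days=90):
    """Return the sorted names of apps whose last pentest is too old.

    last_tested maps app name -> date of last pentest, or None if the app
    has never been tested. max_interval_days=90 approximates one quarter.
    """
    overdue = []
    for name, tested_on in last_tested.items():
        if tested_on is None or (today - tested_on).days > max_interval_days:
            overdue.append(name)
    return sorted(overdue)


# Hypothetical sample data.
last_tested = {
    "checkout": date(2024, 4, 1),
    "accounts": date(2024, 1, 1),
    "search": None,
}
overdue = overdue_for_pentest(last_tested, today=date(2024, 6, 1))
print(overdue)
```

Passing `today` in explicitly, rather than reading the system clock inside the function, makes the check reproducible when the report is regenerated later.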

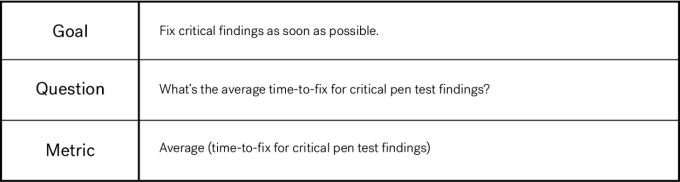

Time to Fix: Critical findings should be fixed as soon as possible.

Finding is important, but fixing is what actually matters. It’s easy to say that we want to fix all the defects that have been found; it’s much harder to make it happen. Developers are focused on developing new features and meeting deadlines, and have limited bandwidth to remediate security issues. It’s certainly not possible to fix all the security defects at once. They have to be prioritized and addressed over time. What kinds of metrics can help with prioritizing which defects should get fixed, and when?

Many organizations require that findings be fixed within a certain period of time, depending on the criticality of the finding. For example, an ecommerce business might require that critical findings discovered on its customer-facing applications be fixed within 48 hours, high severity findings within 10 days, medium severity findings within 30 days, and low severity findings within 90 days.

Deciding exactly when to identify the “start time” and “end time” for this metric can be tricky. Does the clock start ticking when the finding is delivered by the researcher to the organization? Or is it when the finding is communicated to a developer who could actually make the fix? Also, when is a finding considered “fixed”? Is it when a developer says it is, and closes the associated JIRA ticket? Or does a “fixed” status require a re-test and verification from a security team member or from the researcher who originally found it? It matters less which timestamps you choose and more that you are specific and consistent about it when gathering data and calculating the metric.
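To make this concrete, here is a minimal sketch of a time-to-fix check against the example remediation windows above. The choice of timestamps is an assumption made for illustration: the clock starts when the finding is delivered to the organization and stops at a verified re-test. The function names and sample findings are hypothetical.

```python
from datetime import datetime, timedelta

# Remediation windows from the ecommerce example in the text.
SLA = {
    "critical": timedelta(hours=48),
    "high": timedelta(days=10),
    "medium": timedelta(days=30),
    "low": timedelta(days=90),
}


def time_to_fix(start, end):
    """Elapsed time between the chosen start and end timestamps."""
    return end - start


def within_sla(severity, start, end):
    """True if the finding was fixed within the window for its severity."""
    return time_to_fix(start, end) <= SLA[severity]


# Assumption: start = delivered to the organization, end = verified re-test.
# Other choices work too, as long as they are applied consistently.
findings = [
    ("critical", datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 4, 9, 0)),
    ("high", datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 8, 9, 0)),
]
results = [within_sla(sev, start, end) for sev, start, end in findings]
print(results)
```

In this sample, the critical finding took 72 hours and misses its 48-hour window, while the high severity finding took 7 days and meets its 10-day window.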

Pentest Engagement Level Metrics

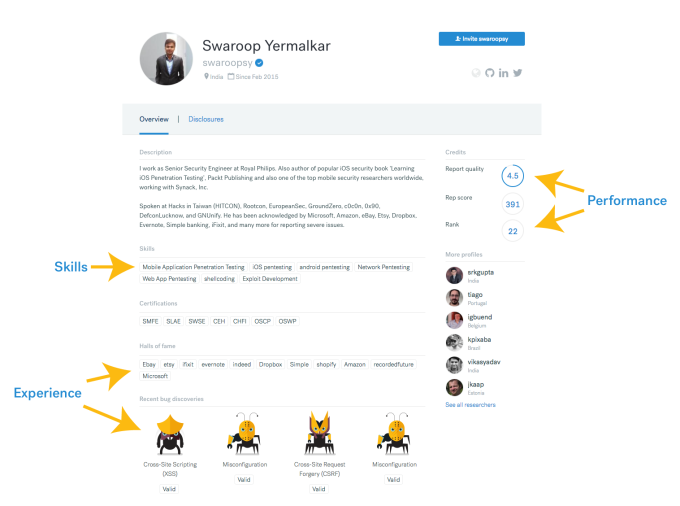

Talent Ratings: The most important attributes of any security researcher are their skill set, experience, and performance.

Talented security researchers are essential to achieving quality penetration testing results. A talented security researcher knows how to do much more than run an automated scan. He or she can think creatively (even maliciously) in order to play out an attacker scenario against an application.

You want the security researchers who are pentesting your applications to have skills that are matched to your application’s technology stack. You want them to have many years of professional experience conducting security tests. And you want them to be highly rated by their team members and clients on their past performance.


It can be difficult to evaluate the quality or talent of a more traditional penetration tester, but crowdsourced security platforms make it easy. They provide this kind of information in a Hall of Fame and by displaying scores on researcher profiles.

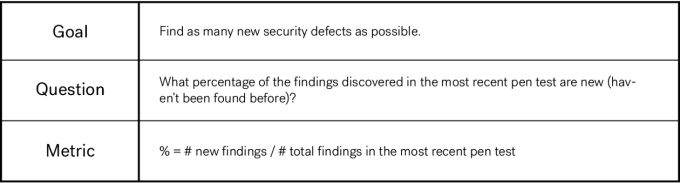

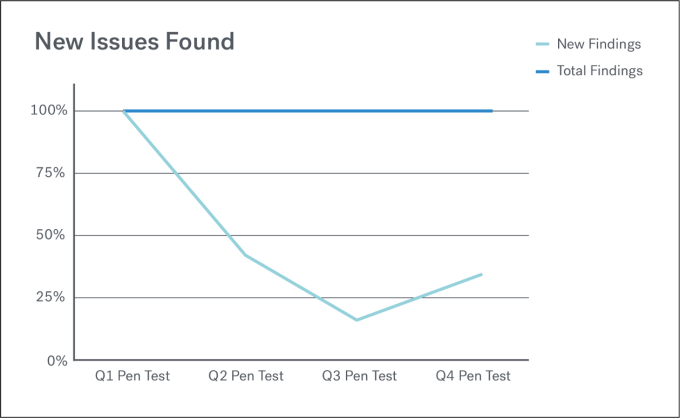

New Issues Found: Ideally, you want to find all the security bugs.

It’s impossible to know definitively if all existing security issues have been discovered in a given security test. However, an indicator that can be counted and tracked is the number of new findings which were discovered in the most recent penetration test.
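Counting new findings requires some way of deciding whether two findings are "the same". The sketch below assumes, purely for illustration, that a finding is identified by its vulnerability type and location; real programs may need a richer key. The sample data is made up.

```python
def new_findings(previous, current):
    """Return findings present in the current test but not the previous one.

    Each finding is a (vulnerability_type, location) tuple. That identity
    key is an assumption of this sketch, not a standard.
    """
    return current - previous


# Hypothetical results from two consecutive pentests of the same app.
previous = {("XSS", "/search"), ("SQL injection", "/login")}
current = {("XSS", "/search"), ("IDOR", "/api/orders"), ("CSRF", "/profile")}

new = new_findings(previous, current)
print(len(new))
```

Tracking this count across engagements gives a trend line even though the true number of undiscovered issues remains unknown.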

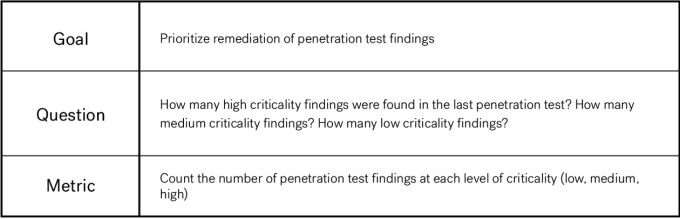

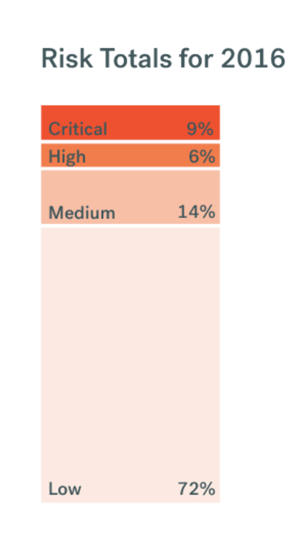

Findings Criticality: Some findings are more critical than others.

The criticality of a penetration test finding can be calculated by considering the potential impact to the business as well as the likelihood of occurrence. Higher criticality security findings should be remediated before lower criticality security findings, especially those that might be easily exploited.
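A simple way to express this is a score that multiplies impact by likelihood and then sorts findings by that score. The three-level scale and the multiplication rule below are assumptions for the sake of a minimal sketch; organizations often use their own scales or an established rating methodology. The sample findings are hypothetical.

```python
# Assumed three-level scale; real programs may use finer-grained ratings.
LEVELS = {"low": 1, "medium": 2, "high": 3}


def criticality_score(impact, likelihood):
    """Combine business impact and likelihood into a single score."""
    return LEVELS[impact] * LEVELS[likelihood]


def remediation_order(findings):
    """Return findings sorted from highest to lowest criticality score."""
    return sorted(
        findings,
        key=lambda f: criticality_score(f["impact"], f["likelihood"]),
        reverse=True,
    )


# Hypothetical findings from a single engagement.
findings = [
    {"title": "Verbose error page", "impact": "low", "likelihood": "high"},
    {"title": "SQL injection in login", "impact": "high", "likelihood": "high"},
    {"title": "Weak password policy", "impact": "medium", "likelihood": "medium"},
]
ordered = [f["title"] for f in remediation_order(findings)]
print(ordered)
```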

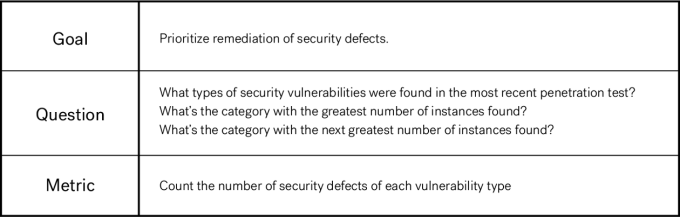

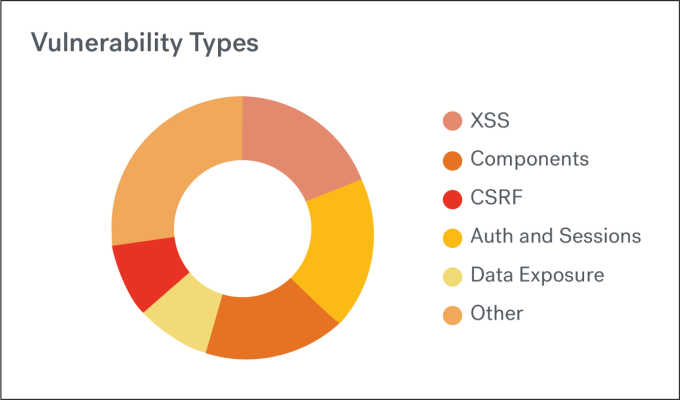

Vulnerability Types: There are many different types of security vulnerabilities.

By visualizing and analyzing how many instances of each vulnerability type have been found in a penetration test, an organization can begin to strategically eliminate certain types of vulnerabilities by focusing prevention strategies on a particular vulnerability type. The OWASP Top 10 contains a list of common web application security risks, but each organization will have its own unique “Top 10” list. If you know what yours is, you can use this information to eliminate entire categories of security vulnerabilities by putting in place focused developer training, writing custom static code analysis rules, integrating tests for these types of security vulnerabilities into QA testing, and so on.
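Building an organization-specific "Top 10" can start with a simple tally of findings by type. This is a minimal sketch using Python's standard `collections.Counter`; the `type` field name and the sample findings are assumptions for illustration.

```python
from collections import Counter


def top_vulnerability_types(findings, n=10):
    """Return the n most common vulnerability types as (type, count) pairs."""
    return Counter(f["type"] for f in findings).most_common(n)


# Hypothetical findings aggregated across pentest reports.
findings = [
    {"type": "XSS"},
    {"type": "SQL injection"},
    {"type": "XSS"},
    {"type": "CSRF"},
    {"type": "XSS"},
    {"type": "SQL injection"},
]
top = top_vulnerability_types(findings, n=2)
print(top)
```

The types at the top of the tally are the natural candidates for focused training or custom static analysis rules.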

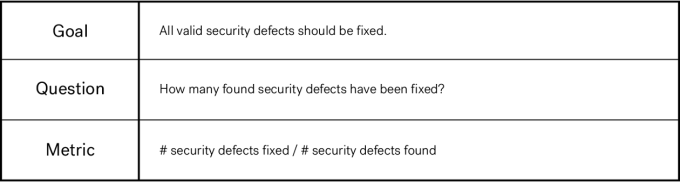

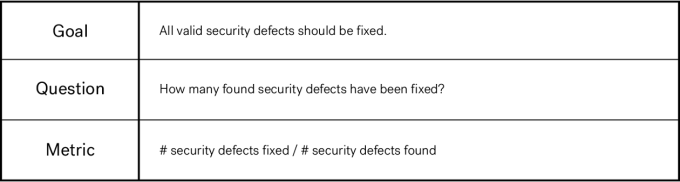

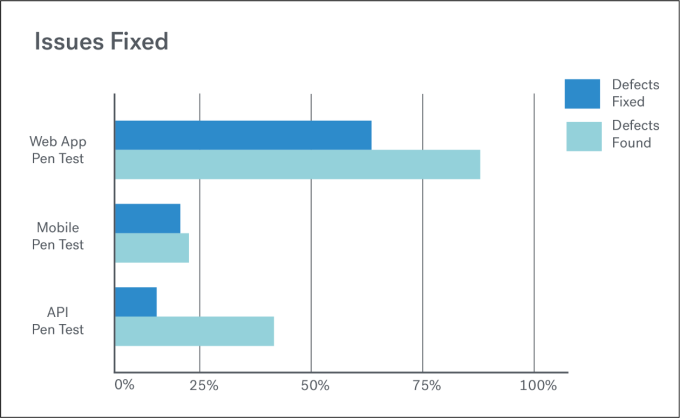

Issues Fixed: Finding is great, but fixing is what actually improves application security.

An organization should track how many issues found in each pentest actually got fixed.

In a recent blog post, I compared different application security testing options and suggested evaluation criteria for deciding which options suit an organization’s particular situation. There, I made kind of a big deal about how it’s all well and good to find security defects, but fixing them is what actually improves the security of an application.

“Once you’ve performed defect discovery in order to find as many true positives as possible, the next step — by no means a trivial one — is to communicate them to the developer team, get them to prioritize the fixes, get them to remediate the issues, and ideally prevent the same issues from coming up again. Fixing security issues is not a technology problem; people and process are also required to get it done.”

It’s important to measure the effectiveness of a penetration testing capability. By effectiveness, I mean how much the penetration testing results actually improved the security of the application code. How many of the found security defects have actually been fixed?
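The question above can be turned into a per-engagement fix rate. The sketch below assumes each finding carries a `status` field with the value `"fixed"` once remediation is complete; both the field name and the sample data are hypothetical, and what counts as "fixed" should follow whatever definition you chose for the time-to-fix metric.

```python
def fix_rate(findings):
    """Return the fraction of findings marked fixed, or None if there are none."""
    if not findings:
        return None
    fixed = sum(1 for f in findings if f["status"] == "fixed")
    return fixed / len(findings)


# Hypothetical findings from one pentest engagement.
engagement = [
    {"id": 1, "status": "fixed"},
    {"id": 2, "status": "fixed"},
    {"id": 3, "status": "open"},
    {"id": 4, "status": "fixed"},
]
rate = fix_rate(engagement)
print(f"Fix rate: {rate:.0%}")
```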

Overall, it’s most important to choose the security metrics that give you the information you need to determine whether you’re meeting your application security objectives.