SANS AI Survey 2024

Explore the current state of AI adoption for cybersecurity and discover insights into how various organizations manage and minimize the risks of AI shortfalls with the SANS 2024 AI Survey.

Don’t wait for an attack to secure your applications—find and fix flaws earlier with repeatable, expert-driven pentesting with the team that pioneered pentest as a service (PtaaS).

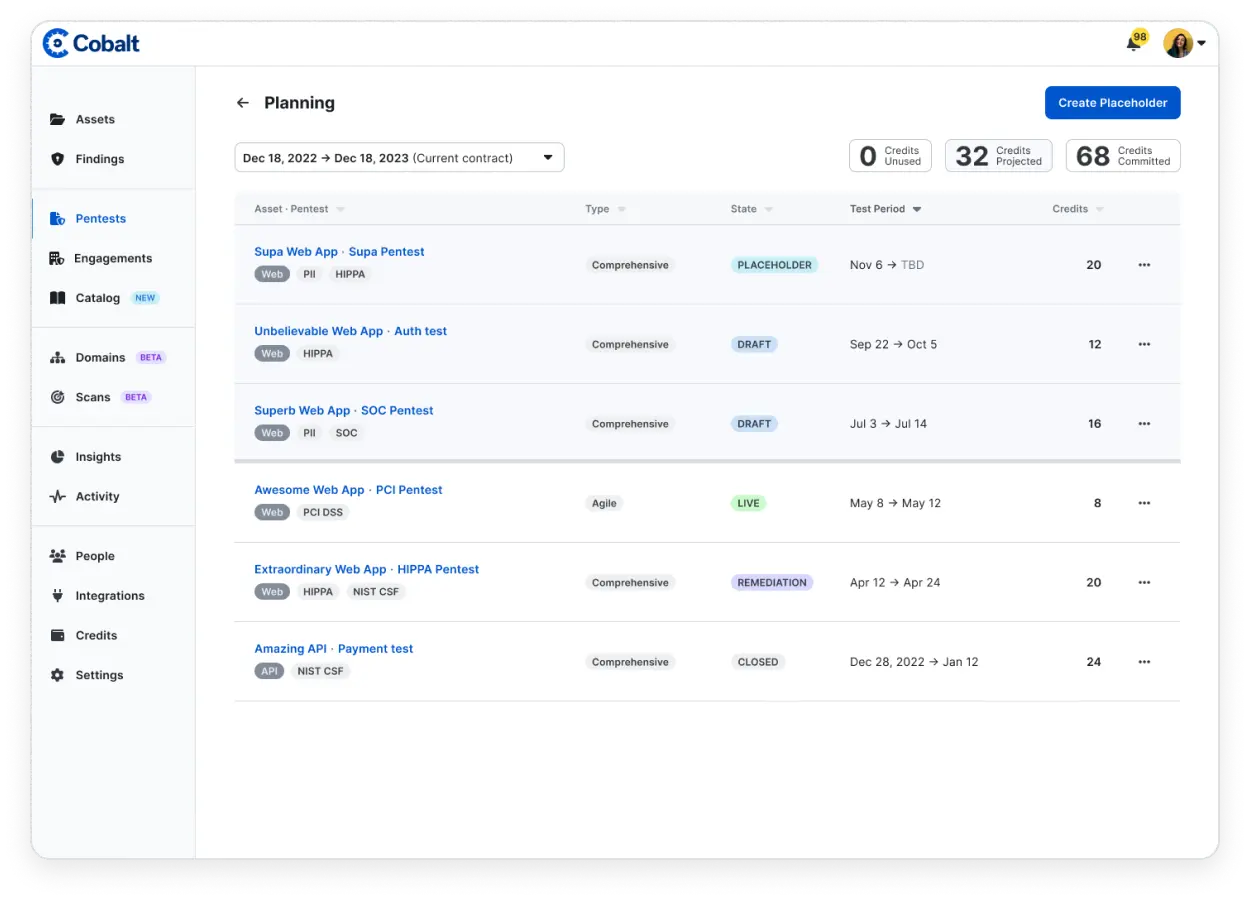

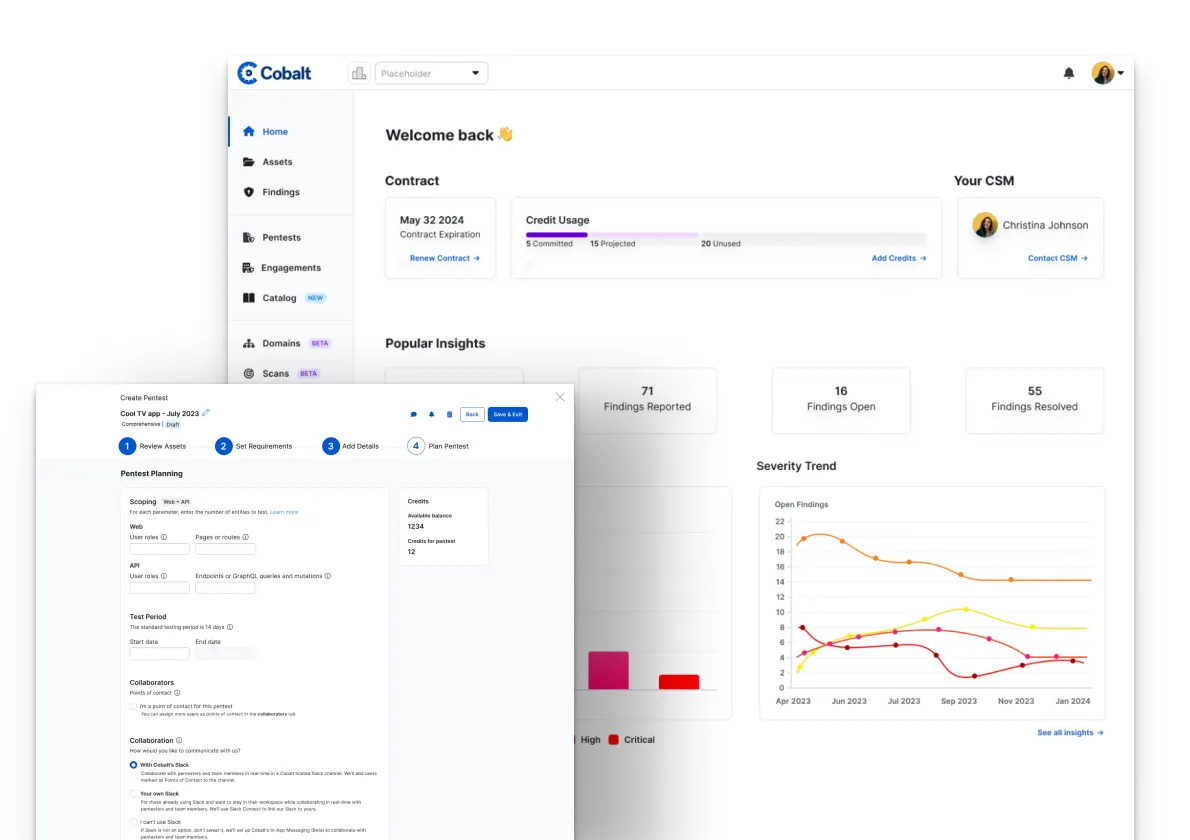

Launch new pentests rapidly with PtaaS: access a pool of expert pentesters and start tests within 24 hours. Reuse stored asset data for subsequent tests and scale your security efforts with our SaaS approach, which caters to all testing requirements.

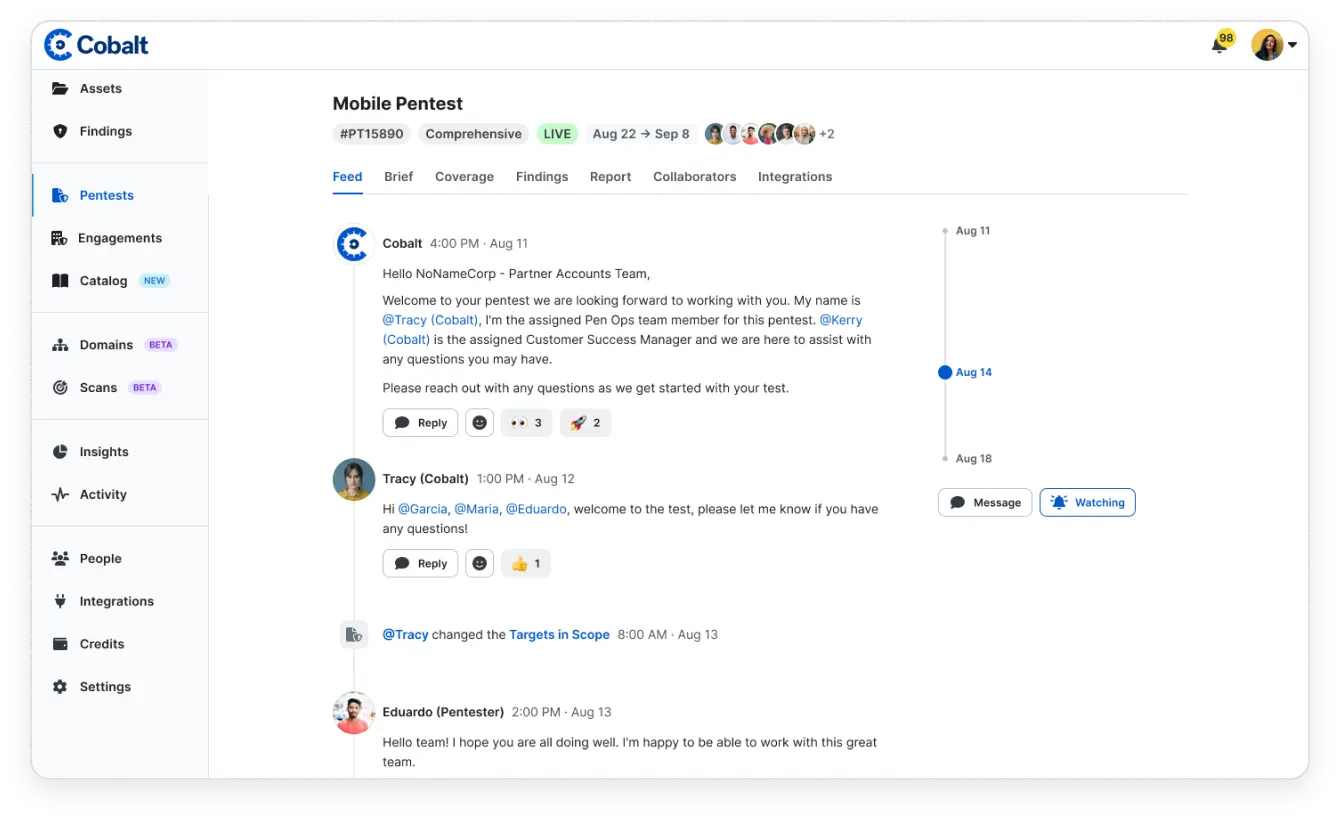

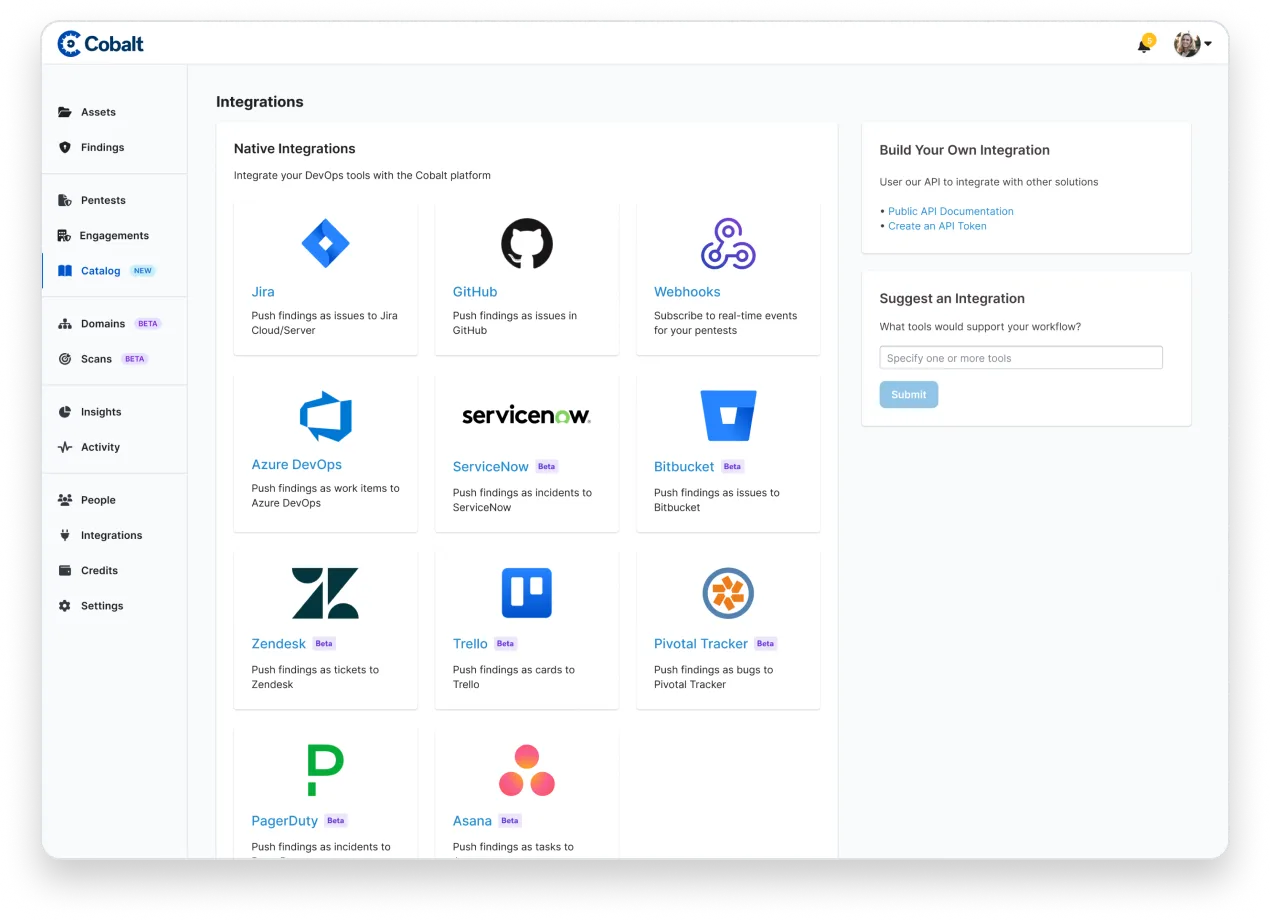

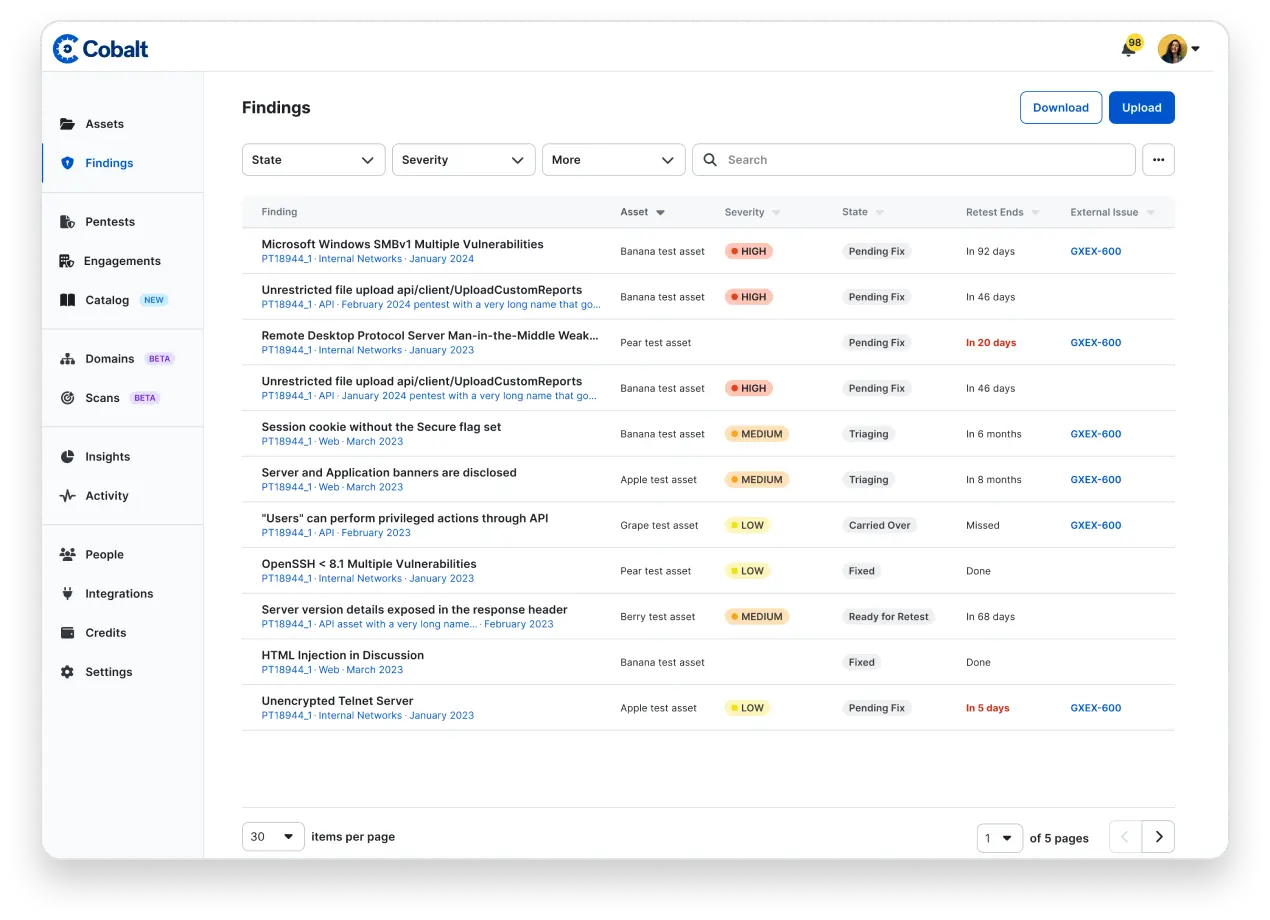

Get real-time results and work directly with your expert testers using built-in integrations for communication and work management, including Jira, Azure DevOps, GitHub, and Slack.

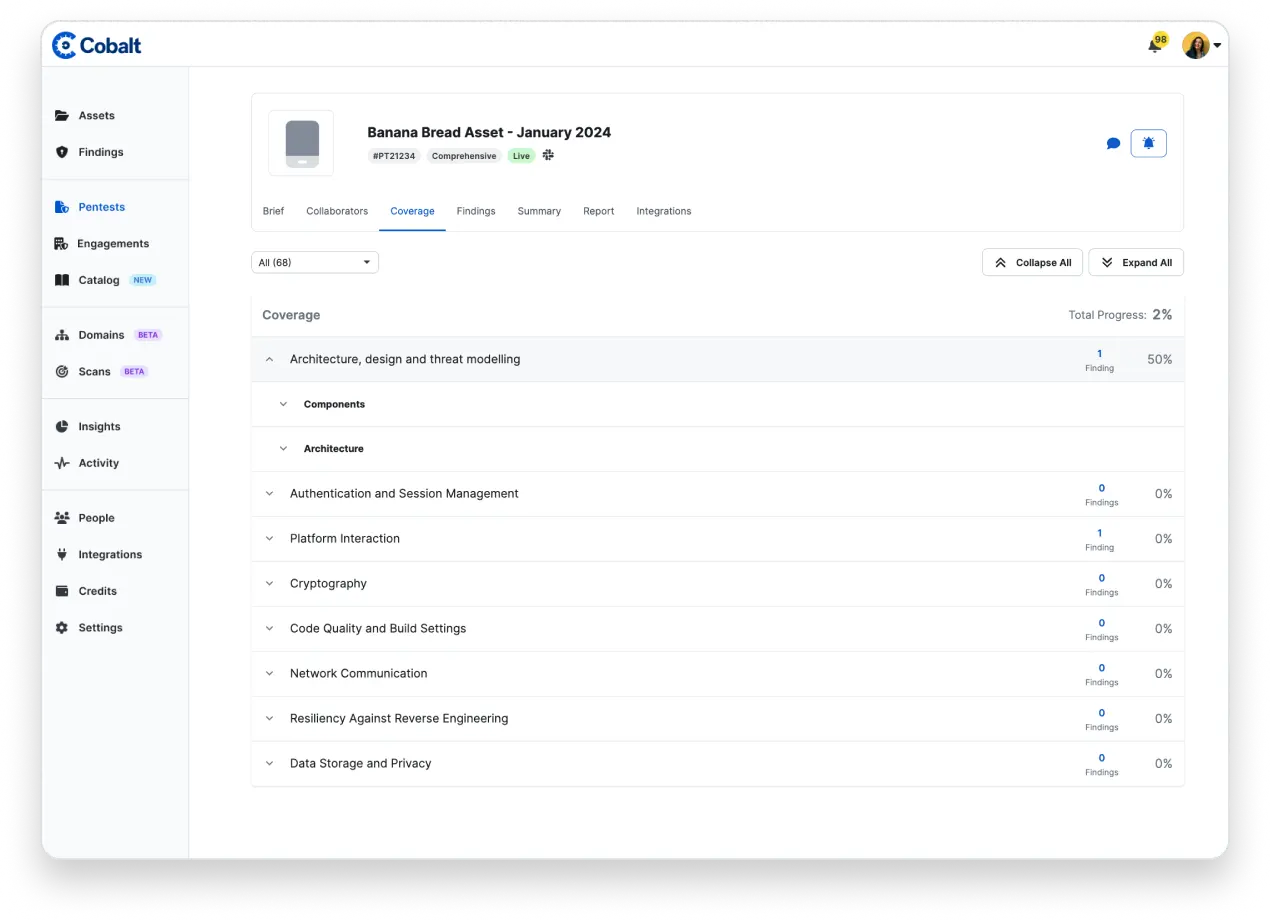

Unlock the full potential of your security testing with the Coverage Checklist. This list of security controls guides pentesters throughout the test for comprehensive coverage. From web applications and mobile apps to AI/LLMs and APIs, we have the correct testing checklist for your specific needs.

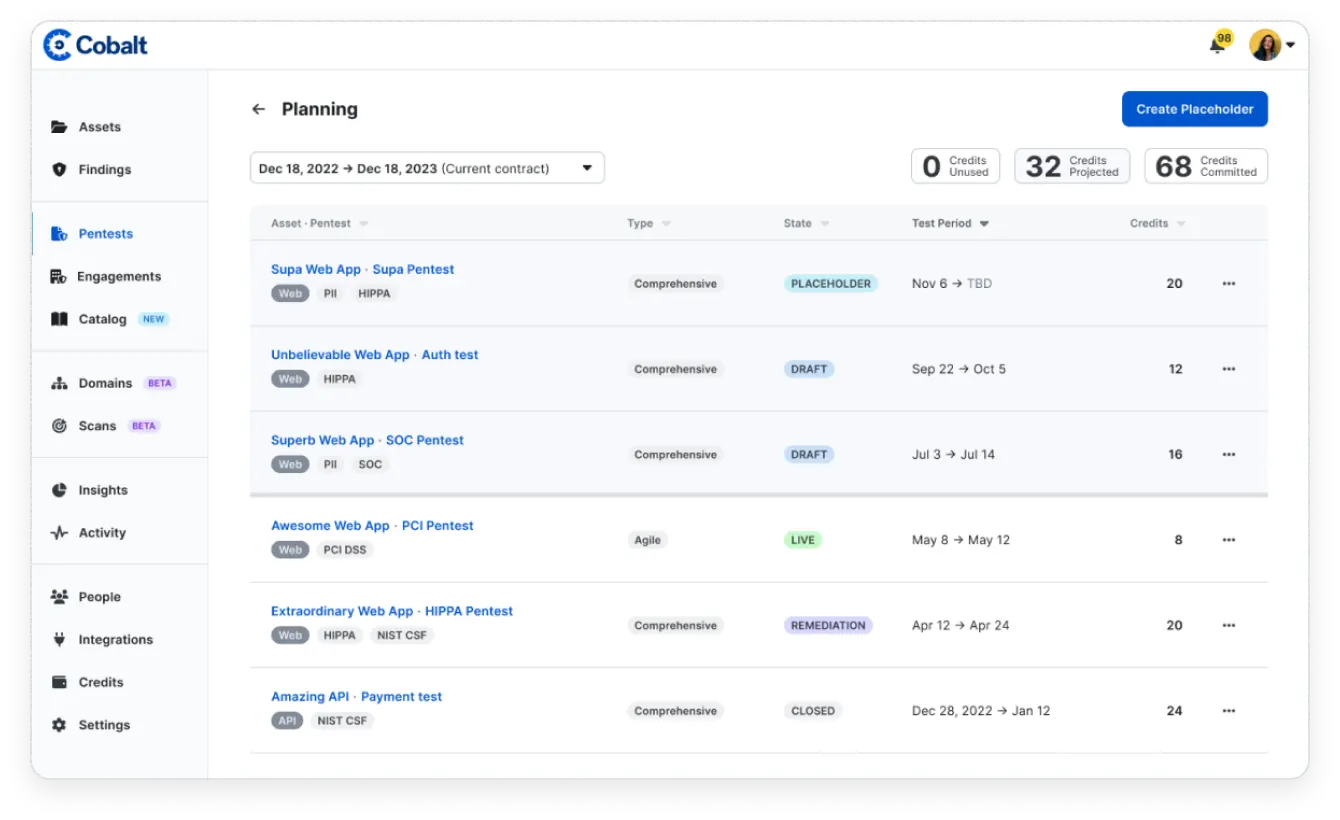

Businesses can actively monitor their test results over longer periods of time to identify trends, root causes, and opportunities for improvement. Better align with your SDLC by purchasing credits in advance so you can quickly launch a test as needed.

Integrate seamlessly with Jira or GitHub, or use the Cobalt API, to relay manual pentest findings to your development teams.

Proactively protect your apps by making pentesting an integral part of your application development lifecycle.

Tap into penetration testing that dives deeper into the code for more robust vulnerability identification and analysis. Combine expert human-driven testing and advanced automation for comprehensive coverage.

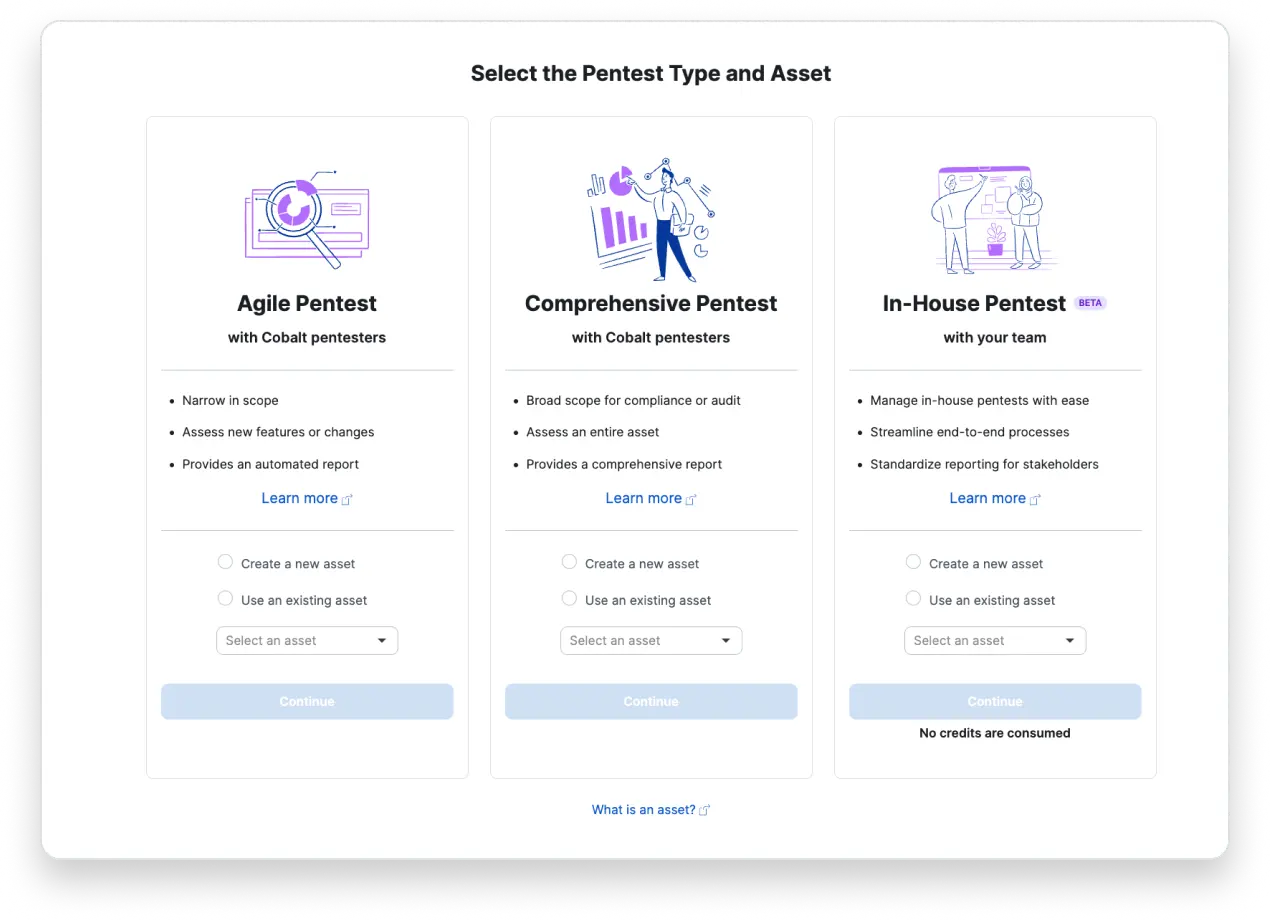

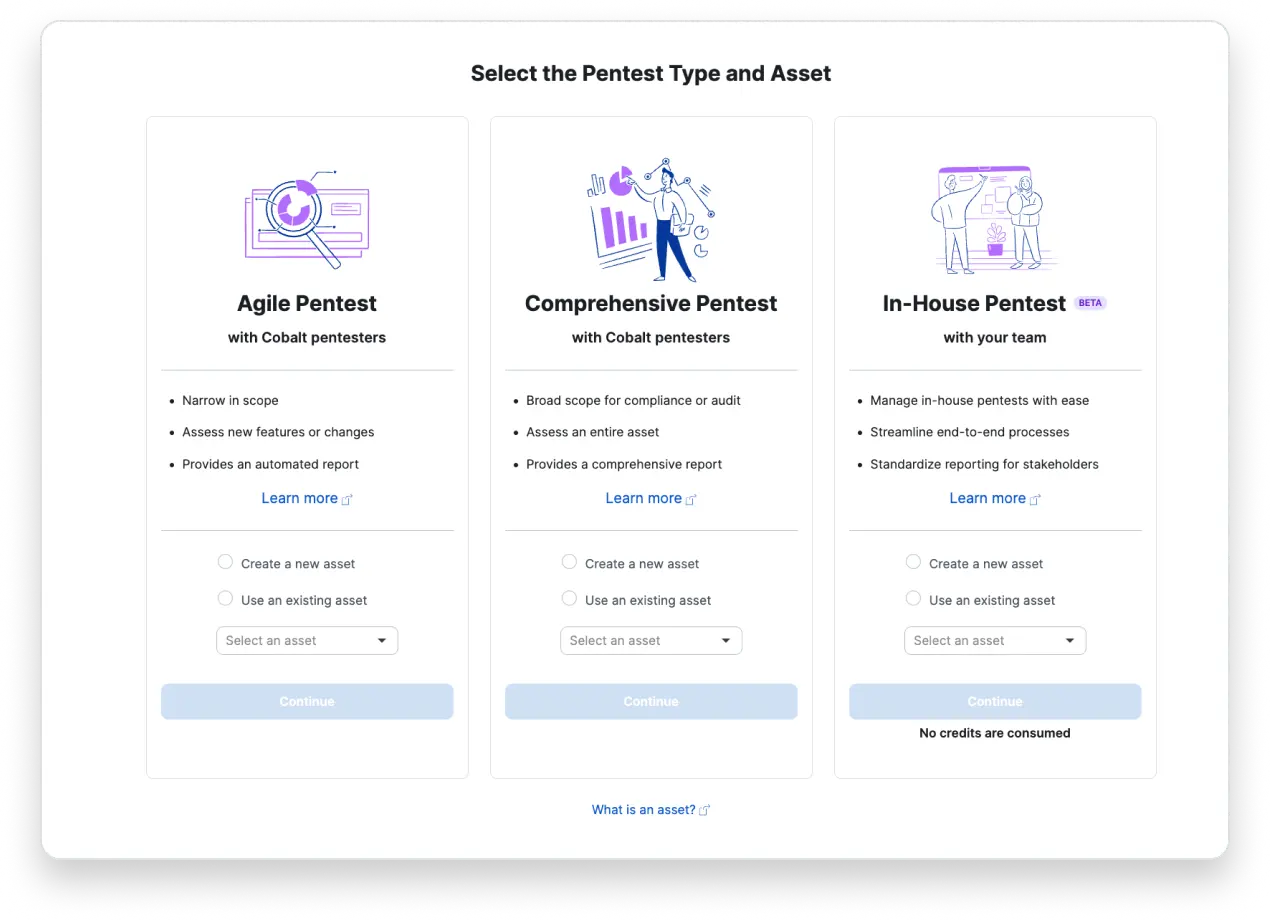

Test new releases. Perform testing on a single OWASP category. Or conduct microservice, delta, and exploitable vulnerability testing with the flexibility of agile pentesting.

Test for business drivers. Perform pentesting for compliance. Or test for M&A activity with the extensive nature of comprehensive pentesting.

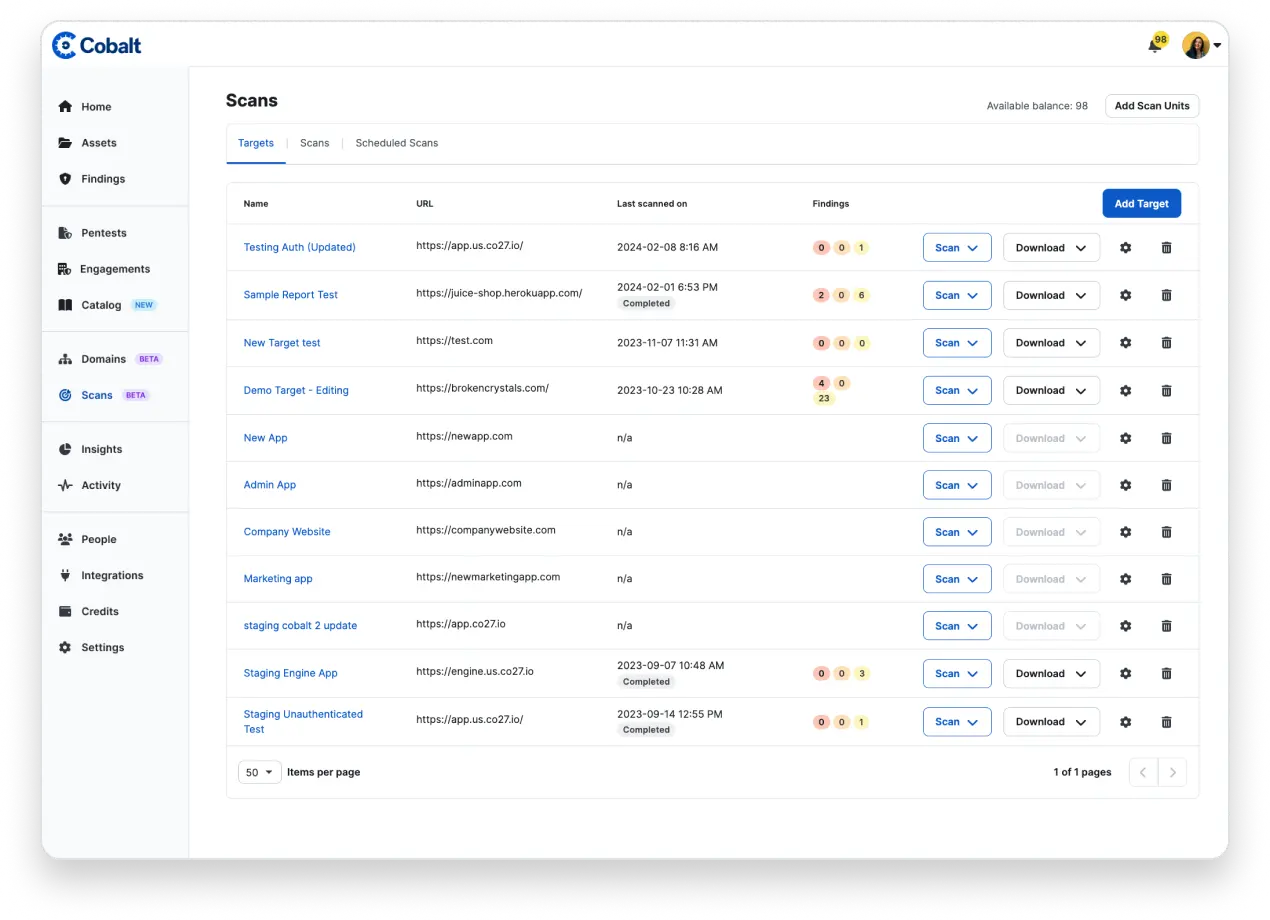

Combine application pentesting with Cobalt-native dynamic application security testing (DAST) and secure code review to maximize application security. By bringing together these solutions, you can get both point-in-time and continuous insight into risk.

Secure applications that leverage LLMs against the latest threats, including prompt injection attacks. Your pentesting will be performed by security experts directly involved in the creation of the OWASP AI and LLM testing methodology.

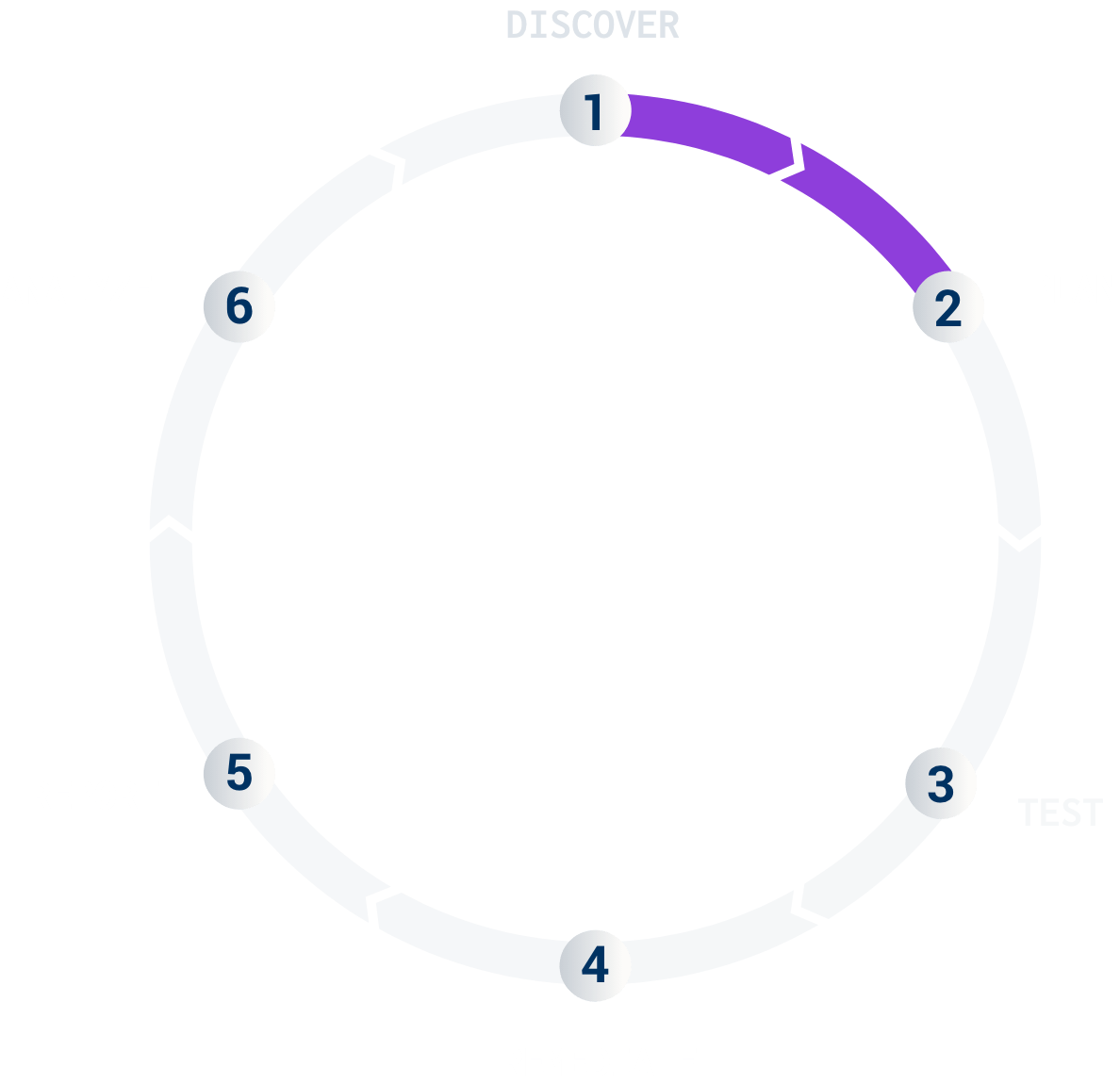

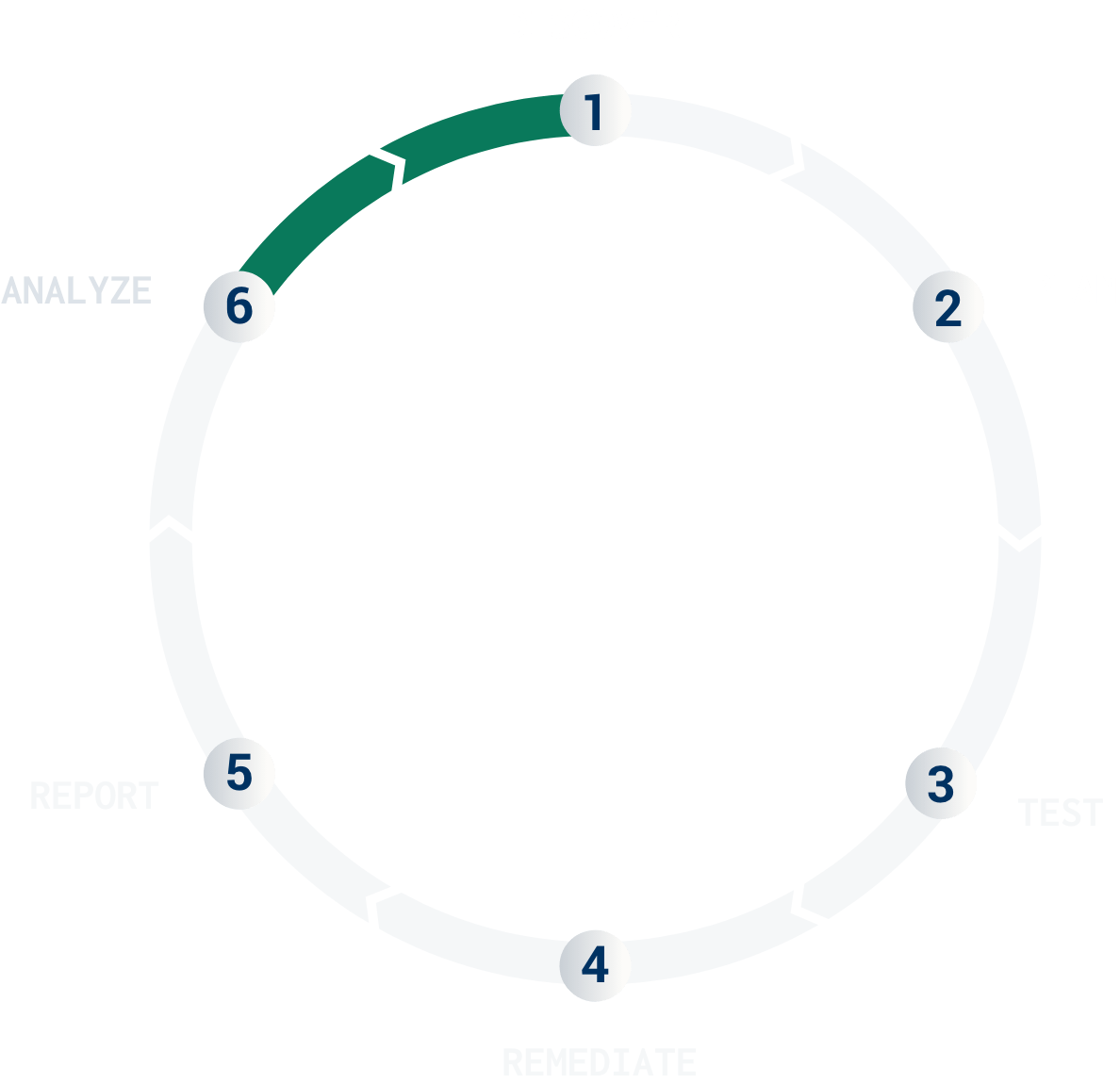

The first step in the Pentest as a Service process is the discovery phase where all parties involved prepare for the engagement. On the customer side, this involves mapping the attack surface areas and creating accounts on the Cobalt platform. The Cobalt PenOps Team assigns a Cobalt Core Lead and Domain Experts with skills that match your technology stack. A Slack channel is also created to simplify real-time communication between you and the Pentest Team.

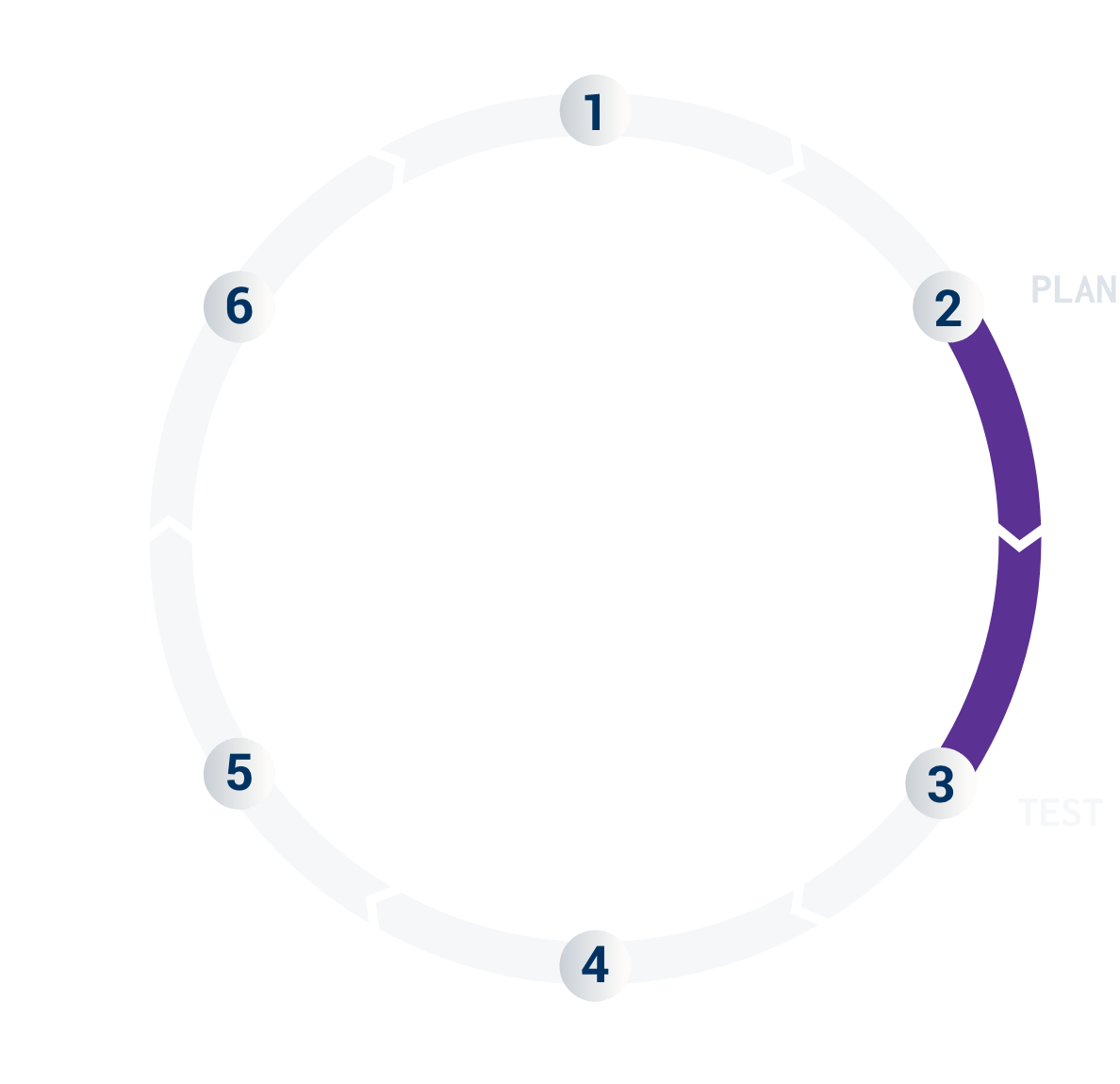

The second step is to strategically plan, scope, and schedule your pentest. This typically involves a 30-minute phone call with the Cobalt teams. The main purpose of the call is to offer a personal introduction, align on the timeline, and finalize the testing scope.

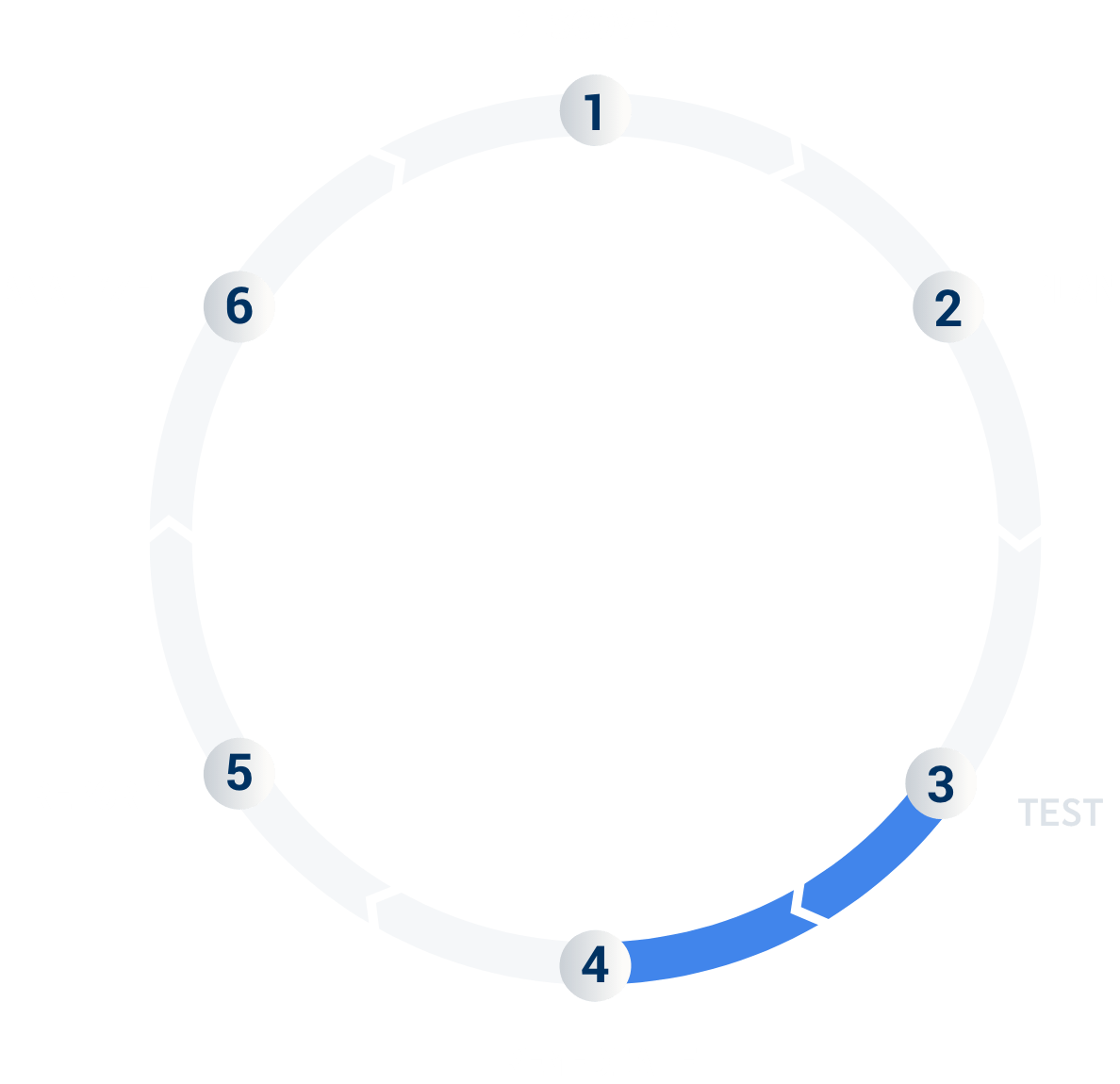

The third step is where the pentesting takes place. Steps 1 and 2 are necessary to establish a clear scope, identify the target environment, and set up credentials for the test. Now is the time for the experts to analyze the target for vulnerabilities and security flaws that might be exploited if not properly mitigated.

As the Pentest Team conducts testing, the Cobalt Core Lead ensures depth of coverage and communicates with your security team as needed via the platform and Slack channel. This is also where the true creative power of the Cobalt Core comes into play.

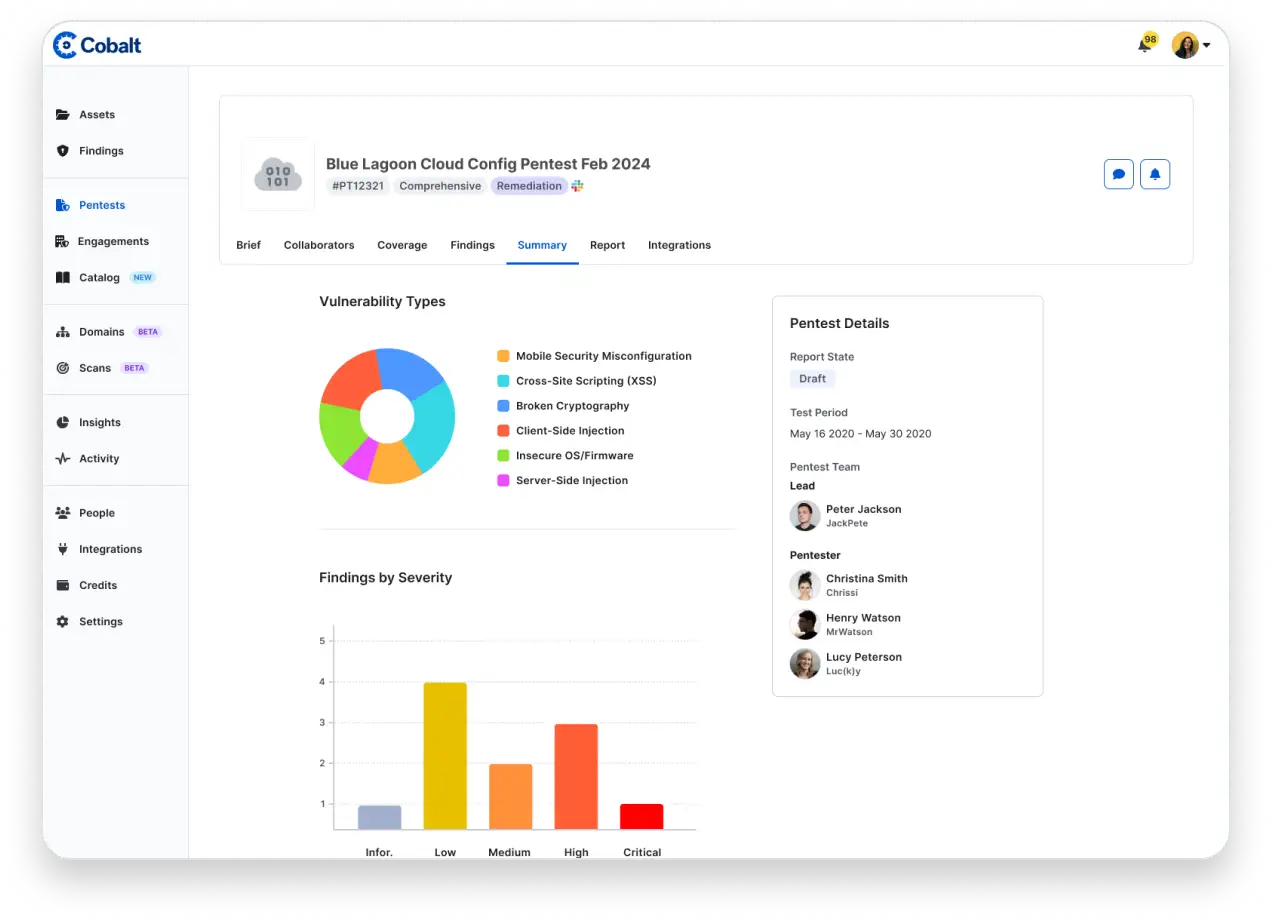

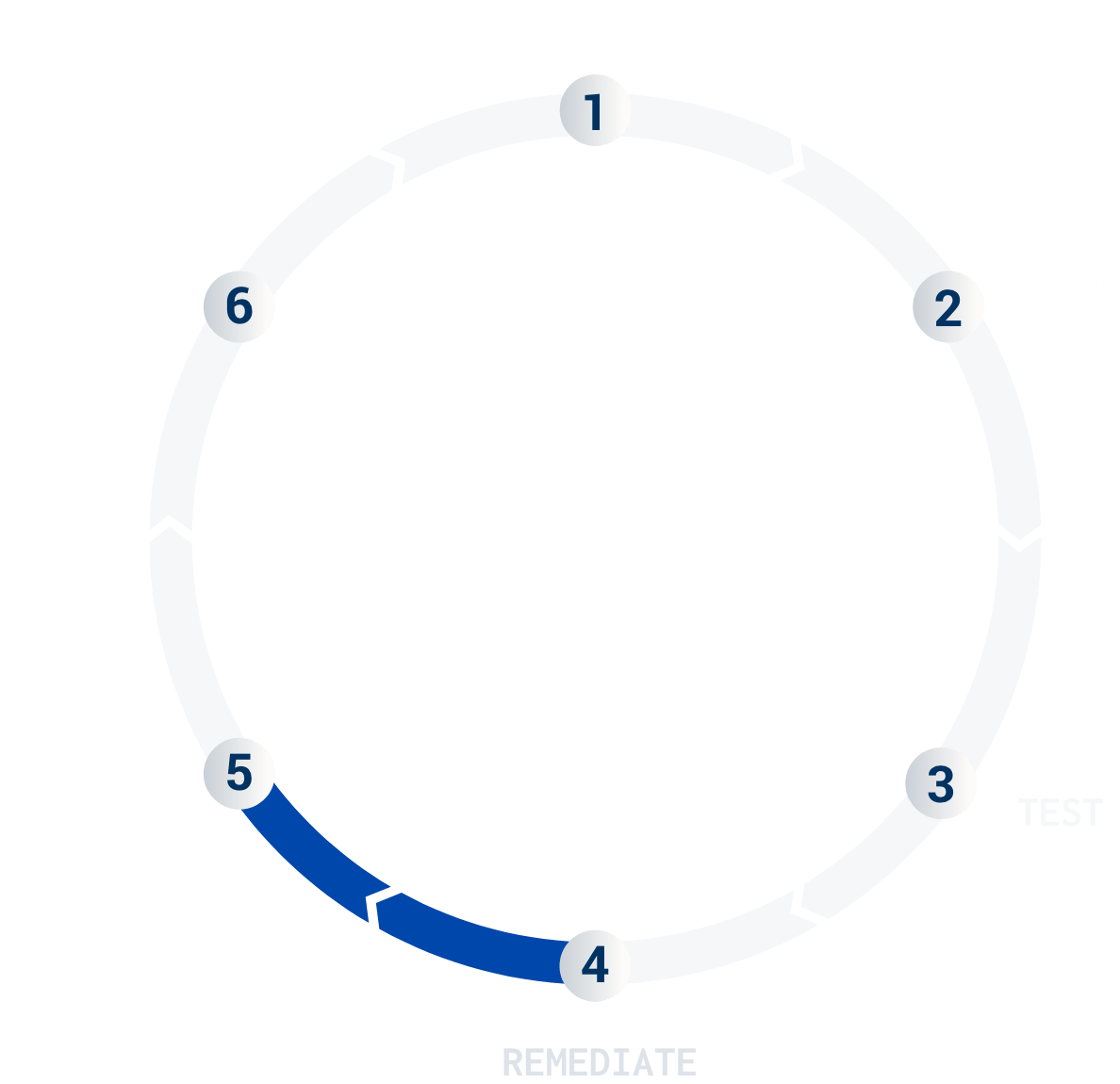

Accelerate your remediation with the fourth phase in the lifecycle. This phase is an interactive, ongoing process in which individual findings are posted in the platform as they are discovered. Integrations send them directly to developers’ issue trackers, and teams can start patching immediately. At the end of your test, the Cobalt Core Lead reviews all the findings and produces a final summary report.

The report is not static; it's a living document that is updated as changes are made (see Re-Testing in Phase 5).

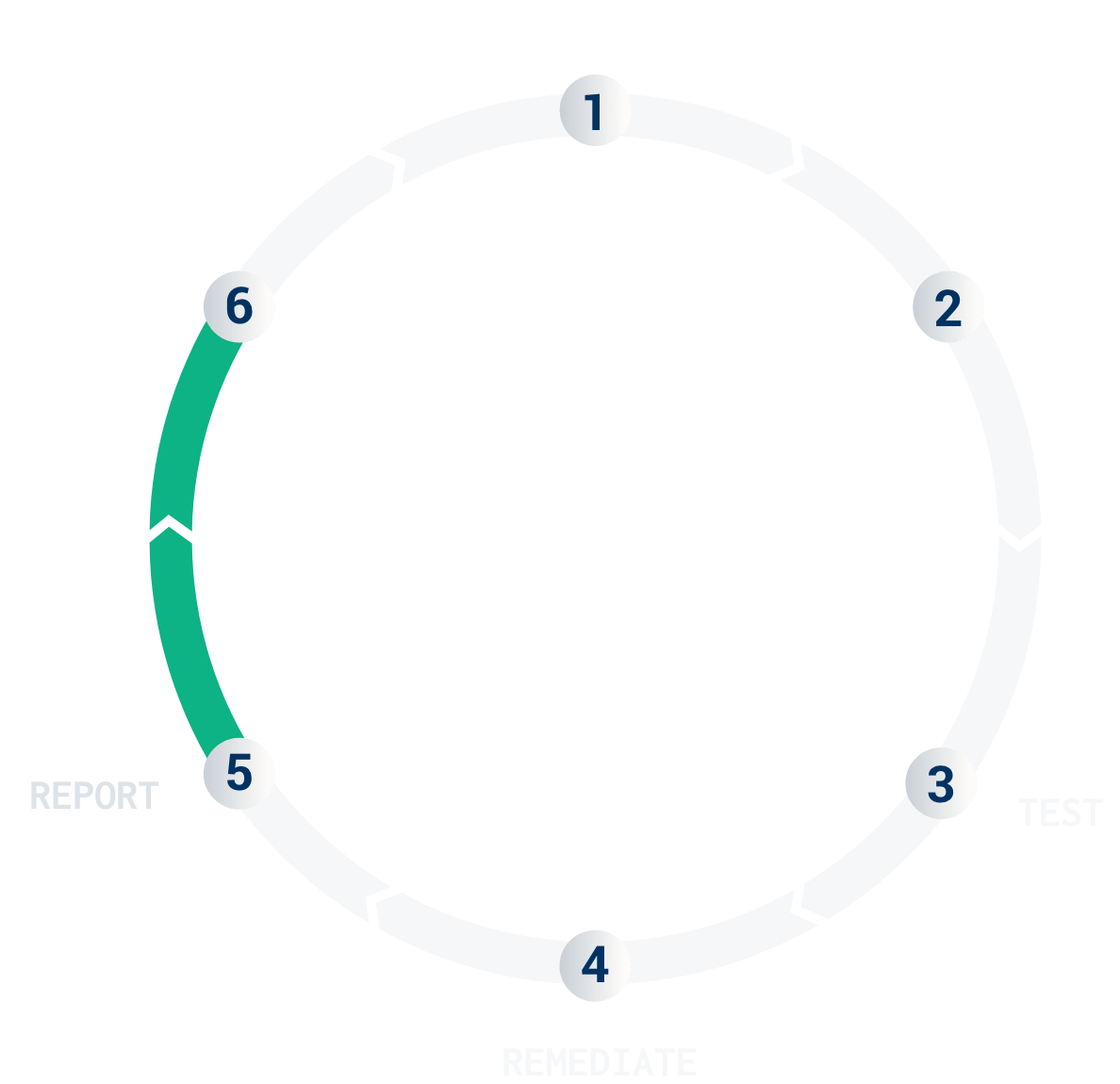

When you mark a finding as “Ready for Re-test” on the platform, the Cobalt Core Lead verifies the fix and updates the final report. Reports are available in different formats suited to various stakeholders, such as executive teams, auditors, and customers.

Once the testing is complete, you have the opportunity to analyze your pentest results more thoroughly to inform and prioritize remediation actions.

At this phase, you benefit from a deep dive into the pentest report with insights comparing your risk profile against others globally, identifying common vulnerabilities to inform development teams, and driving your security program's maturity.

Furthermore, executive teams can easily track and communicate pentest program performance.

Start testing in 24 hours. Connect directly with our security experts. And centralize your testing using the Cobalt platform. Trust the pioneers of PtaaS to optimize your cybersecurity across your entire attack surface.