Before you can choose the right approach to testing your application security, you first need to understand your options and the economics of those options. For example, there are a few different ways an organization can crowdsource its security testing. Launching a public bug bounty is one of those options, but whether it is a good fit depends on what you are trying to achieve. At AppSec USA 2016, I gave a talk on these tradeoffs, which are discussed below.

The Balancing Act of Security Testing

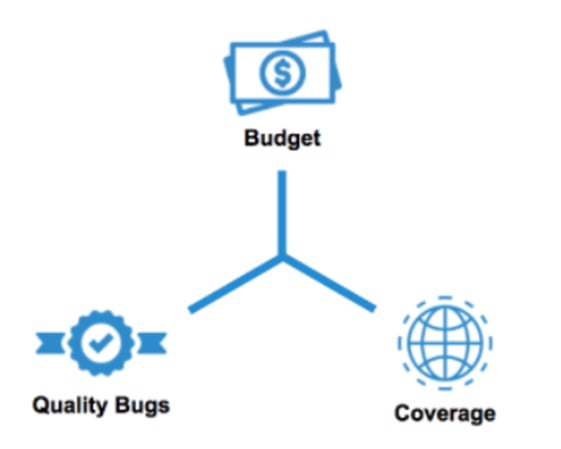

The goal of security testing is to identify as many significant bugs as possible across your products, given the budget you have available. At first glance, a public bug bounty program seems like a good way to optimize this balancing act. It seems logical that the more people you have testing your app, the more bugs you are likely to find.

Balancing act of security testing

But not all bugs are created equal. Simple XSSs are relatively innocuous, whereas more severe bugs, such as authentication flaws, can expose the entire application. A random process for bug discovery might not be the best approach to target the most critical bugs under budget constraints.

Separating the Signal from the Noise

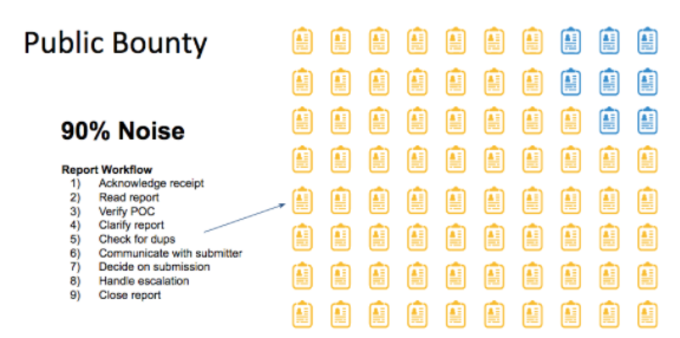

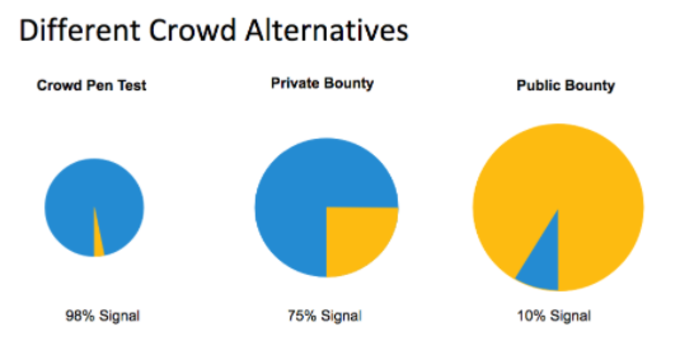

The biggest challenge in the public bug bounty approach is the low signal-to-noise ratio. Suppose there are 1,000 bounty hunters participating in a bug bounty program and each submits 10 reports. Out of the 10,000 reports submitted, many will be duplicates of each other. Other submissions might simply reflect a misunderstanding of the software’s functionality. Currently, it’s a fair assumption that around 90% of reports submitted in a public bug bounty program are either invalid or duplicates.

Public bug bounty workflow and signal-to-noise

From the perspective of the security team, there is a substantial administrative burden associated with managing all these incoming reports. In the example above, with 10,000 reports and 10% signal, the security team needs to filter out 9,000 invalid or duplicate reports.

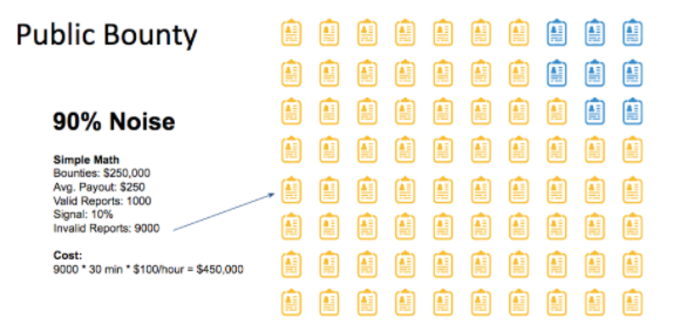

A Simple Business Case

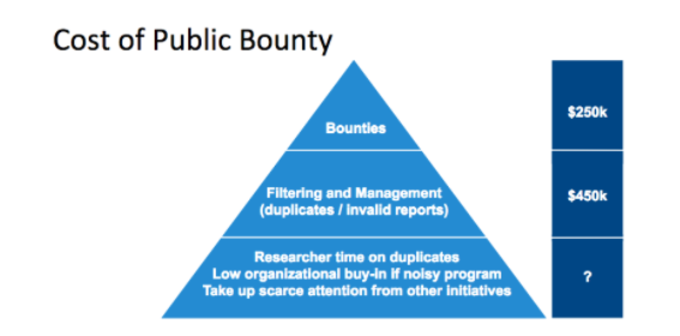

If 10% of the 10,000 reports are valid and you pay $250 on average per valid report, the cost of bounties will be $250,000 (1,000 × $250). This total may seem like a reasonable cost given the value of the bugs that are surfaced.

However, the cost of bounties is just the tip of the iceberg of the total cost of running the program. Only 10% of the reports are valid, but there is a cost to filter out the 90% that are invalid or duplicates. Assume it takes 30 minutes on average to review a report and decide whether it’s valid, and that a reviewer costs $100 per hour. The cost of reviewing the 9,000 noise reports would then be $450,000 (9,000 × 0.5 hours × $100), or almost twice the cost of bounty payments.
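The arithmetic in this business case can be written as a short Python sketch so you can plug in your own numbers. The defaults are the figures used in this post; the class and method names (`BountyProgram`, `bounty_cost`, `noise_triage_cost`) are hypothetical, chosen only for this illustration, and the model ignores the time spent reviewing the valid reports, as the text above does.

```python
from dataclasses import dataclass


@dataclass
class BountyProgram:
    """Back-of-the-envelope cost model; defaults mirror the example in this post."""
    hunters: int = 1_000
    reports_per_hunter: int = 10
    valid_fraction: float = 0.10           # share of reports that are real, unique bugs
    avg_bounty_usd: float = 250.0          # average payout per valid report
    review_hours_per_report: float = 0.5   # 30 minutes of triage per report
    reviewer_hourly_usd: float = 100.0

    @property
    def total_reports(self) -> int:
        return self.hunters * self.reports_per_hunter

    @property
    def valid_reports(self) -> int:
        return round(self.total_reports * self.valid_fraction)

    @property
    def noise_reports(self) -> int:
        # Invalid or duplicate reports that still have to be reviewed.
        return self.total_reports - self.valid_reports

    def bounty_cost(self) -> float:
        return self.valid_reports * self.avg_bounty_usd

    def noise_triage_cost(self) -> float:
        # Cost of reviewing only the noise, as in the text above.
        return (self.noise_reports
                * self.review_hours_per_report
                * self.reviewer_hourly_usd)


program = BountyProgram()
ratio = program.noise_triage_cost() / program.bounty_cost()

print(f"Reports: {program.total_reports:,} "
      f"({program.valid_reports:,} valid, {program.noise_reports:,} noise)")
print(f"Bounty payments:   ${program.bounty_cost():,.0f}")
print(f"Noise triage cost: ${program.noise_triage_cost():,.0f}")
print(f"Triage / bounty ratio: {ratio:.1f}")
```

With the defaults, the sketch reproduces the $250,000 in bounty payments and $450,000 in triage cost. Changing `valid_fraction` shows how quickly the triage overhead shrinks as the signal-to-noise ratio improves.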

Public bug bounty ROI calculation

Naturally, this doesn’t include the cost of acting on any of the verified bugs: prioritizing them, fixing the high-priority ones, and retesting the new code. Furthermore, a full accounting of the bug bounty program cost should also include “soft” costs, such as the risk of low organizational buy-in if your bug bounty program produces too much noise, and the difficulty of maintaining the morale and engagement of the best bug hunters if their reports are consistently marked as duplicates.

Cost of public bug bounty

Some companies have improved the signal-to-noise ratio by making the bug bounty program private, limiting participation to a smaller group of selected bug-hunters. This will improve the signal, but it still leaves room for substantial improvement.

A Targeted Crowdsourced Alternative

While bug bounty programs are sometimes appropriate, there are crowdsourced security alternatives that are often better suited to a range of situations. In the blog post Deconstructing Bug Bounties, I compare and contrast some of these different approaches. One approach is crowdsourced penetration testing. Rather than bringing in hundreds or thousands of participants, we use a small group, typically three security professionals, who work collaboratively to find vulnerabilities.

The small number of participants lowers the program administration overhead and allows for a more collaborative approach to testing in which security testers have visibility into each other's findings, thereby reducing the number of duplicate reports.

In a crowdsourced pen test, the process is far more focused on the activities that move the needle on the real objectives of the process: finding, verifying, and addressing real bugs. With a higher signal-to-noise ratio, far less of the cost goes toward the overhead for administrative tasks.

Crowdsourced security testing

Alternative Alternatives

You might be asking: Well, if reducing the participants is an effective strategy, why not take it a step further by hiring a single full-time tester on staff? And the answer is that sometimes that will, indeed, make sense. However, the need for software testing will often ebb and flow; you might need a lot of testing bandwidth towards the end of a software development cycle, and little or none at other times.

Multiple perspectives can also be invaluable. With too many people involved, the signal risks being drowned in a cacophony of noise. But a small hand-picked team can offer the best of both worlds: diverse expertise coupled with a strong signal with limited noise.

There’s no one-size-fits-all solution for application security testing, but a quick back-of-the-envelope ROI calculation can steer you to a better option. We often find that a periodic or continuous pen testing setup, delivered collaboratively by freelance researchers, yields a higher ROI and better coverage than traditional public bug bounties.

Want to read more?

If you are interested in learning more, check out this post about the future of application security.